In April 2025 I studied prompt engineering because I wanted to get the most out of how I was using Artificial Intelligence (AI), and that led to my blog post Unlock the Power of AI: Your Beginner’s Guide to Prompt Engineering. While prompt engineering has a part to play, it is the minor partner to context engineering.

In short, context engineering provides the what, why and how information to the Large Language Model (LLM); the prompt is how you explain it.

A lesson I have learnt for myself is that Claude's responses have become more personal as its context on me and my requirements has built up over my first few months of using it.

What is Prompt Engineering?

Prompt engineering is the process of creating and optimising prompts for effective interactions with Large Language Models (LLMs) such as Claude or Gemini. Basically, it is the means by which you communicate your needs to the AI.

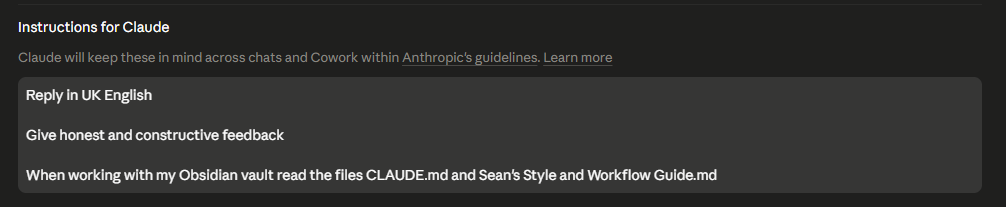

Many Large Language Models such as Claude also support system prompting, which allows you to define the big picture context for how you will be using the model. The screenshot below shows you the current system prompt I use with Claude.

Anthropic advise that the system prompt should be specific enough to guide behaviour but broad enough to give the model some freedom.

The Gap

One of the fundamental concepts to be aware of when using a Large Language Model is the context window. It contains all the information the model can see. It is a finite resource, and different models have different capacities, measured in tokens.
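
To make the idea of a finite budget concrete, here is a minimal Python sketch. It is an illustration only: the `estimate_tokens` and `fit_to_window` helpers are hypothetical names, and counting one token per word is a crude assumption, since real models use their own tokenisers and will report different numbers.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def fit_to_window(snippets: list[str], budget: int) -> list[str]:
    """Keep snippets in order until the next one would exceed the token budget."""
    kept = []
    used = 0
    for snippet in snippets:
        cost = estimate_tokens(snippet)
        if used + cost > budget:
            break  # the window is full, so everything after this is left out
        kept.append(snippet)
        used += cost
    return kept


notes = [
    "Write in GB English.",
    "The reader is new to prompt engineering.",
    "Here is a very long journal entry " + "word " * 50,
]

# With a tiny budget, only the two short notes fit; the long entry is dropped.
print(fit_to_window(notes, budget=15))
```

The point of the sketch is simply that whatever does not fit in the window is invisible to the model, so it pays to put the most useful context first.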

Any context you provide within this context window helps shape the output. Without it, the model falls back on a generic response, because all it can do is predict the most likely answer from what it has been given.

That is why I specify in the system prompt that I want the output in GB English. I live in England and I would like to read any response using, as far as I’m concerned, the correct spelling. I want to read colour not color.
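
If you use a model through code rather than a chat app, the same idea can be sketched in Python as follows. This is a hedged illustration: the `build_request` helper is hypothetical, the `system` and `messages` field names are loosely modelled on common chat-style APIs and may differ for your provider, and the system prompt wording is an example rather than the one I actually use.

```python
def build_request(system_prompt: str, user_prompt: str) -> dict:
    """Bundle the standing instructions with a single user prompt.

    The field names are an assumption; check your provider's documentation.
    """
    return {
        "system": system_prompt,  # big-picture context, sent with every request
        "messages": [{"role": "user", "content": user_prompt}],
    }


# Illustrative wording only.
SYSTEM_PROMPT = "Always respond in GB English, for example 'colour' rather than 'color'."

request = build_request(
    SYSTEM_PROMPT,
    "Suggest three titles for a post about context engineering.",
)
print(request["system"])
```

Because the system prompt travels with every request, the spelling preference does not need repeating in each individual prompt.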

What is Context Engineering?

Context engineering is the practice of providing the model with the full context it needs to produce the results you require.

Context engineering includes the following:

- System prompting

- Tools

- External data

But for me, the most important piece of context you can provide is background information on what you are trying to achieve and why. This extra piece of information can make a huge difference as it influences the prediction machine that sits at the heart of the model to produce output that more closely matches your requirements.
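
One way to picture how these pieces fit together is the short Python sketch below. Treat it as a thought experiment rather than a recipe: the `Context` class and its field names are hypothetical, the example values are made up, and in practice tools are usually passed to the model as structured definitions rather than as a line of text.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Hypothetical container for the pieces of context listed above."""

    system_prompt: str
    background: str  # what you are trying to achieve and why
    external_notes: list[str] = field(default_factory=list)
    tool_names: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the pieces into one block of text, skipping any that are empty."""
        sections = [
            "SYSTEM PROMPT:\n" + self.system_prompt,
            "BACKGROUND (what and why):\n" + self.background,
        ]
        if self.external_notes:
            notes = "\n".join("- " + note for note in self.external_notes)
            sections.append("EXTERNAL DATA:\n" + notes)
        if self.tool_names:
            sections.append("TOOLS AVAILABLE:\n" + ", ".join(self.tool_names))
        return "\n\n".join(sections)


# Example values are invented for illustration.
ctx = Context(
    system_prompt="Respond in GB English.",
    background="I am drafting a beginner-friendly blog post so readers can get better results from AI.",
    external_notes=["Note on context windows", "Journal entry on using Claude"],
)
print(ctx.render())
```

Notice that the background section sits alongside the system prompt rather than being an afterthought, which mirrors how much weight I give it.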

That is why I’m experimenting with how I can best use AI with my Obsidian vault, which is the home of my Zettelkasten, journal and writing. What better source of context is there?

What is next for you?

The aim of this post was to help you realise that prompt engineering has turned into context engineering. Writing a good prompt is just part of the story; what really matters is providing the model with the right context to carry out your request.

I’m crossing my red line on writing the first draft, because Claude generated the table below as part of the layout it created for this blog post. But I think it gives a clear explanation of the differences between prompt engineering and context engineering, so consider that a good reason for breaching my red line in this instance.

| | Prompt Engineering | Context Engineering |
|---|---|---|
| Scope | Single prompt / instruction | Entire information environment |
| Mindset | What to say | What the model knows |
| Goal | One high-quality response | Consistent, reliable AI systems |
